mirror of

https://github.com/lancedb/lancedb.git

synced 2026-05-17 03:50:38 +00:00

docs: assorted copyedits (#1998)

This includes a handful of minor edits I made while reading the docs. In addition to a few spelling fixes, * standardize on "rerank" over "re-rank" in prose * terminate sentences with periods or colons as appropriate * replace some usage of dashes with colons, such as in "Try it yourself - <link>" All changes are surface-level. No changes to semantics or structure. --------- Co-authored-by: Will Jones <willjones127@gmail.com>

This commit is contained in:

@@ -2,7 +2,7 @@

|

||||

====================================================================

|

||||

Adaptive RAG introduces a RAG technique that combines query analysis with self-corrective RAG.

|

||||

|

||||

For Query Analysis, it uses a small classifier(LLM), to decide the query’s complexity. Query Analysis helps routing smoothly to adjust between different retrieval strategies No retrieval, Single-shot RAG or Iterative RAG.

|

||||

For Query Analysis, it uses a small classifier(LLM), to decide the query’s complexity. Query Analysis guides adjustment between different retrieval strategies: No retrieval, Single-shot RAG or Iterative RAG.

|

||||

|

||||

**[Official Paper](https://arxiv.org/pdf/2403.14403)**

|

||||

|

||||

@@ -12,9 +12,9 @@ For Query Analysis, it uses a small classifier(LLM), to decide the query’s com

|

||||

</figcaption>

|

||||

</figure>

|

||||

|

||||

**[Offical Implementation](https://github.com/starsuzi/Adaptive-RAG)**

|

||||

**[Official Implementation](https://github.com/starsuzi/Adaptive-RAG)**

|

||||

|

||||

Here’s a code snippet for query analysis

|

||||

Here’s a code snippet for query analysis:

|

||||

|

||||

```python

|

||||

from langchain_core.prompts import ChatPromptTemplate

|

||||

@@ -35,7 +35,7 @@ llm = ChatOpenAI(model="gpt-3.5-turbo-0125", temperature=0)

|

||||

structured_llm_router = llm.with_structured_output(RouteQuery)

|

||||

```

|

||||

|

||||

For defining and querying retriever

|

||||

The following example defines and queries a retriever:

|

||||

|

||||

```python

|

||||

# add documents in LanceDB

|

||||

@@ -48,4 +48,4 @@ retriever = vectorstore.as_retriever()

|

||||

# query using defined retriever

|

||||

question = "How adaptive RAG works"

|

||||

docs = retriever.get_relevant_documents(question)

|

||||

```

|

||||

```

|

||||

|

||||

@@ -11,7 +11,7 @@ FLARE, stands for Forward-Looking Active REtrieval augmented generation is a gen

|

||||

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/examples/better-rag-FLAIR/main.ipynb)

|

||||

|

||||

Here’s a code snippet for using FLARE with Langchain

|

||||

Here’s a code snippet for using FLARE with Langchain:

|

||||

|

||||

```python

|

||||

from langchain.vectorstores import LanceDB

|

||||

@@ -35,4 +35,4 @@ flare = FlareChain.from_llm(llm=llm,retriever=vector_store_retriever,max_generat

|

||||

result = flare.run(input_text)

|

||||

```

|

||||

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/examples/better-rag-FLAIR/main.ipynb)

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/examples/better-rag-FLAIR/main.ipynb)

|

||||

|

||||

@@ -11,7 +11,7 @@ HyDE, stands for Hypothetical Document Embeddings is an approach used for precis

|

||||

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/examples/Advance-RAG-with-HyDE/main.ipynb)

|

||||

|

||||

Here’s a code snippet for using HyDE with Langchain

|

||||

Here’s a code snippet for using HyDE with Langchain:

|

||||

|

||||

```python

|

||||

from langchain.llms import OpenAI

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

**Agentic RAG 🤖**

|

||||

====================================================================

|

||||

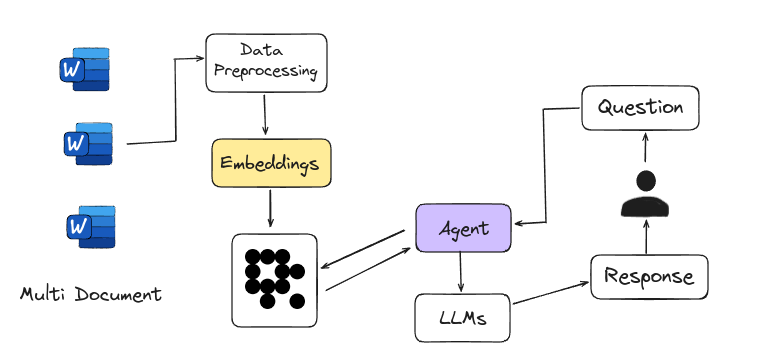

Agentic RAG is Agent-based RAG introduces an advanced framework for answering questions by using intelligent agents instead of just relying on large language models. These agents act like expert researchers, handling complex tasks such as detailed planning, multi-step reasoning, and using external tools. They navigate multiple documents, compare information, and generate accurate answers. This system is easily scalable, with each new document set managed by a sub-agent, making it a powerful tool for tackling a wide range of information needs.

|

||||

Agentic RAG introduces an advanced framework for answering questions by using intelligent agents instead of just relying on large language models. These agents act like expert researchers, handling complex tasks such as detailed planning, multi-step reasoning, and using external tools. They navigate multiple documents, compare information, and generate accurate answers. This system is easily scalable, with each new document set managed by a sub-agent, making it a powerful tool for tackling a wide range of information needs.

|

||||

|

||||

<figure markdown="span">

|

||||

|

||||

@@ -9,7 +9,7 @@ Agentic RAG is Agent-based RAG introduces an advanced framework for answering qu

|

||||

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/tutorials/Agentic_RAG/main.ipynb)

|

||||

|

||||

Here’s a code snippet for defining retriever using Langchain

|

||||

Here’s a code snippet for defining retriever using Langchain:

|

||||

|

||||

```python

|

||||

from langchain.text_splitter import RecursiveCharacterTextSplitter

|

||||

@@ -41,7 +41,7 @@ retriever = vectorstore.as_retriever()

|

||||

|

||||

```

|

||||

|

||||

Agent that formulates an improved query for better retrieval results and then grades the retrieved documents

|

||||

Here is an agent that formulates an improved query for better retrieval results and then grades the retrieved documents:

|

||||

|

||||

```python

|

||||

def grade_documents(state) -> Literal["generate", "rewrite"]:

|

||||

@@ -98,4 +98,4 @@ def rewrite(state):

|

||||

return {"messages": [response]}

|

||||

```

|

||||

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/tutorials/Agentic_RAG/main.ipynb)

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/tutorials/Agentic_RAG/main.ipynb)

|

||||

|

||||

@@ -4,7 +4,7 @@

|

||||

Corrective-RAG (CRAG) is a strategy for Retrieval-Augmented Generation (RAG) that includes self-reflection and self-grading of retrieved documents. Here’s a simplified breakdown of the steps involved:

|

||||

|

||||

1. **Relevance Check**: If at least one document meets the relevance threshold, the process moves forward to the generation phase.

|

||||

2. **Knowledge Refinement**: Before generating an answer, the process refines the knowledge by dividing the document into smaller segments called "knowledge strips."

|

||||

2. **Knowledge Refinement**: Before generating an answer, the process refines the knowledge by dividing the document into smaller segments called "knowledge strips".

|

||||

3. **Grading and Filtering**: Each "knowledge strip" is graded, and irrelevant ones are filtered out.

|

||||

4. **Additional Data Source**: If all documents are below the relevance threshold, or if the system is unsure about their relevance, it will seek additional information by performing a web search to supplement the retrieved data.

|

||||

|

||||

@@ -19,11 +19,11 @@ Above steps are mentioned in

|

||||

|

||||

Corrective Retrieval-Augmented Generation (CRAG) is a method that works like a **built-in fact-checker**.

|

||||

|

||||

**[Offical Implementation](https://github.com/HuskyInSalt/CRAG)**

|

||||

**[Official Implementation](https://github.com/HuskyInSalt/CRAG)**

|

||||

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/tutorials/Corrective-RAG-with_Langgraph/CRAG_with_Langgraph.ipynb)

|

||||

|

||||

Here’s a code snippet for defining a table with the [Embedding API](https://lancedb.github.io/lancedb/embeddings/embedding_functions/), and retrieves the relevant documents.

|

||||

Here’s a code snippet for defining a table with the [Embedding API](https://lancedb.github.io/lancedb/embeddings/embedding_functions/), and retrieves the relevant documents:

|

||||

|

||||

```python

|

||||

import pandas as pd

|

||||

@@ -115,6 +115,6 @@ def grade_documents(state):

|

||||

}

|

||||

```

|

||||

|

||||

Check Colab for the Implementation of CRAG with Langgraph

|

||||

Check Colab for the Implementation of CRAG with Langgraph:

|

||||

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/tutorials/Corrective-RAG-with_Langgraph/CRAG_with_Langgraph.ipynb)

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/tutorials/Corrective-RAG-with_Langgraph/CRAG_with_Langgraph.ipynb)

|

||||

|

||||

@@ -6,7 +6,7 @@ One of the main benefits of Graph RAG is its ability to capture and represent co

|

||||

|

||||

**[Official Paper](https://arxiv.org/pdf/2404.16130)**

|

||||

|

||||

**[Offical Implementation](https://github.com/microsoft/graphrag)**

|

||||

**[Official Implementation](https://github.com/microsoft/graphrag)**

|

||||

|

||||

[Microsoft Research Blog](https://www.microsoft.com/en-us/research/blog/graphrag-unlocking-llm-discovery-on-narrative-private-data/)

|

||||

|

||||

@@ -39,16 +39,16 @@ python3 -m graphrag.index --root dataset-dir

|

||||

|

||||

- **Execute Query**

|

||||

|

||||

Global Query Execution gives a broad overview of dataset

|

||||

Global Query Execution gives a broad overview of dataset:

|

||||

|

||||

```bash

|

||||

python3 -m graphrag.query --root dataset-dir --method global "query-question"

|

||||

```

|

||||

|

||||

Local Query Execution gives a detailed and specific answers based on the context of the entities

|

||||

Local Query Execution gives a detailed and specific answers based on the context of the entities:

|

||||

|

||||

```bash

|

||||

python3 -m graphrag.query --root dataset-dir --method local "query-question"

|

||||

```

|

||||

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/examples/Graphrag/main.ipynb)

|

||||

[](https://colab.research.google.com/github/lancedb/vectordb-recipes/blob/main/examples/Graphrag/main.ipynb)

|

||||

|

||||

@@ -15,7 +15,7 @@ MRAG is cost-effective and energy-efficient because it avoids extra LLM queries,

|

||||

|

||||

**[Official Implementation](https://github.com/spcl/MRAG)**

|

||||

|

||||

Here’s a code snippet for defining different embedding spaces with the [Embedding API](https://lancedb.github.io/lancedb/embeddings/embedding_functions/)

|

||||

Here’s a code snippet for defining different embedding spaces with the [Embedding API](https://lancedb.github.io/lancedb/embeddings/embedding_functions/):

|

||||

|

||||

```python

|

||||

import lancedb

|

||||

@@ -44,6 +44,6 @@ class Space3(LanceModel):

|

||||

vector: Vector(model3.ndims()) = model3.VectorField()

|

||||

```

|

||||

|

||||

Create different tables using defined embedding spaces, then make queries to each embedding space. Use the resulted closest documents from each embedding space to generate answers.

|

||||

Create different tables using defined embedding spaces, then make queries to each embedding space. Use the resulting closest documents from each embedding space to generate answers.

|

||||

|

||||

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

**Self RAG 🤳**

|

||||

====================================================================

|

||||

Self-RAG is a strategy for Retrieval-Augmented Generation (RAG) to get better retrieved information, generated text, and checking their own work, all without losing their flexibility. Unlike the traditional Retrieval-Augmented Generation (RAG) method, Self-RAG retrieves information as needed, can skip retrieval if not needed, and evaluates its own output while generating text. It also uses a process to pick the best output based on different preferences.

|

||||

Self-RAG is a strategy for Retrieval-Augmented Generation (RAG) to get better retrieved information, generated text, and validation, without loss of flexibility. Unlike the traditional Retrieval-Augmented Generation (RAG) method, Self-RAG retrieves information as needed, can skip retrieval if not needed, and evaluates its own output while generating text. It also uses a process to pick the best output based on different preferences.

|

||||

|

||||

**[Official Paper](https://arxiv.org/pdf/2310.11511)**

|

||||

|

||||

@@ -10,11 +10,11 @@ Self-RAG is a strategy for Retrieval-Augmented Generation (RAG) to get better re

|

||||

</figcaption>

|

||||

</figure>

|

||||

|

||||

**[Offical Implementation](https://github.com/AkariAsai/self-rag)**

|

||||

**[Official Implementation](https://github.com/AkariAsai/self-rag)**

|

||||

|

||||

Self-RAG starts by generating a response without retrieving extra info if it's not needed. For questions that need more details, it retrieves to get the necessary information.

|

||||

|

||||

Here’s a code snippet for defining retriever using Langchain

|

||||

Here’s a code snippet for defining retriever using Langchain:

|

||||

|

||||

```python

|

||||

from langchain.text_splitter import RecursiveCharacterTextSplitter

|

||||

@@ -46,7 +46,7 @@ retriever = vectorstore.as_retriever()

|

||||

|

||||

```

|

||||

|

||||

Functions that grades the retrieved documents and if required formulates an improved query for better retrieval results

|

||||

The following functions grade the retrieved documents and formulate an improved query for better retrieval results, if required:

|

||||

|

||||

```python

|

||||

def grade_documents(state) -> Literal["generate", "rewrite"]:

|

||||

@@ -93,4 +93,4 @@ def rewrite(state):

|

||||

model = ChatOpenAI(temperature=0, model="gpt-4-0125-preview", streaming=True)

|

||||

response = model.invoke(msg)

|

||||

return {"messages": [response]}

|

||||

```

|

||||

```

|

||||

|

||||

@@ -1,8 +1,8 @@

|

||||

**SFR RAG 📑**

|

||||

====================================================================

|

||||

Salesforce AI Research introduces SFR-RAG, a 9-billion-parameter language model trained with a significant emphasis on reliable, precise, and faithful contextual generation abilities specific to real-world RAG use cases and relevant agentic tasks. They include precise factual knowledge extraction, distinguishing relevant against distracting contexts, citing appropriate sources along with answers, producing complex and multi-hop reasoning over multiple contexts, consistent format following, as well as refraining from hallucination over unanswerable queries.

|

||||

Salesforce AI Research introduced SFR-RAG, a 9-billion-parameter language model trained with a significant emphasis on reliable, precise, and faithful contextual generation abilities specific to real-world RAG use cases and relevant agentic tasks. It targets precise factual knowledge extraction, distinction between relevant and distracting contexts, citation of appropriate sources along with answers, production of complex and multi-hop reasoning over multiple contexts, consistent format following, as well as minimization of hallucination over unanswerable queries.

|

||||

|

||||

**[Offical Implementation](https://github.com/SalesforceAIResearch/SFR-RAG)**

|

||||

**[Official Implementation](https://github.com/SalesforceAIResearch/SFR-RAG)**

|

||||

|

||||

<figure markdown="span">

|

||||

|

||||

|

||||

Reference in New Issue

Block a user