We currently cannot drop a tenant before removing its directory, or use
`Tenant::drop` for this. This creates unnecessary or unactionable
warnings, at least during detach. Silence the most typical one, file not
found. Log the remaining ones at `error!`.

Cc: #2442

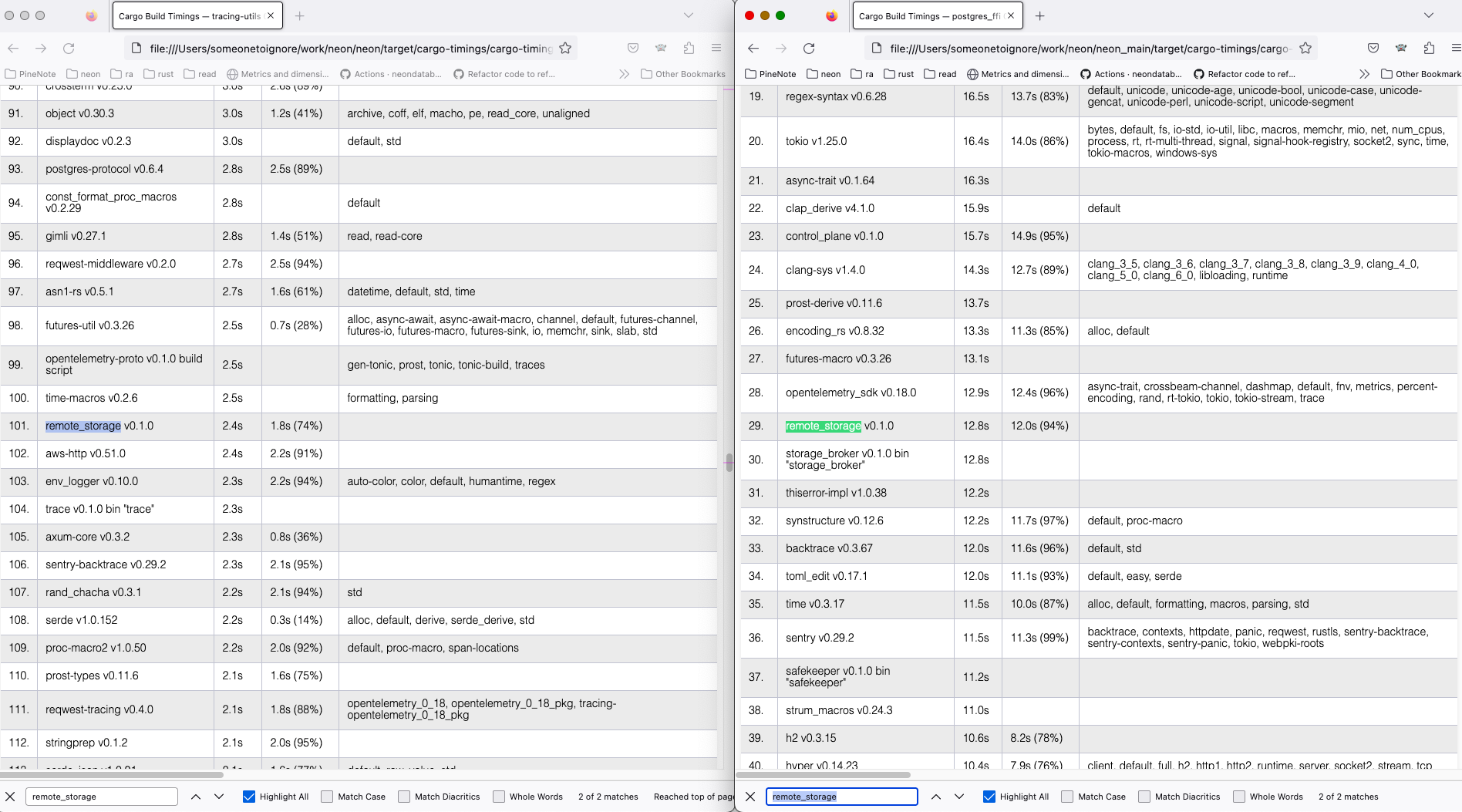

We don't know how our s3 remote_storage is performing, or if it's

blocking the shutdown. Well, for sampling reasons, we will not really

know even after this PR.

Add metrics:

- align remote_storage metrics towards #4813 goals

- histogram
  `remote_storage_s3_request_seconds{request_type=(get_object|put_object|delete_object|list_objects), result=(ok|err|cancelled)}`

- histogram `remote_storage_s3_wait_seconds{request_type=(same kinds)}`

- counter `remote_storage_s3_cancelled_waits_total{request_type=(same kinds)}`

Follow-up work:

- After release, remove the old metrics, migrate dashboards

Histogram buckets are rough guesses, need to be tuned. In pageserver we

have a download timeout of 120s, so I think the 100s bucket is quite

nice.

## Problem

The current output from a prod binary at startup is:

```

git-env:765455bca22700e49c053d47f44f58a6df7c321f failpoints: true, features: [] launch_timestamp: 2023-08-02 10:30:35.545217477 UTC

```

It's confusing to read that line, then read the code and think "if

failpoints is true, but not in the features list, what does that mean?".

As far as I can tell, the check of `fail/failpoints` is just always

false because cargo doesn't expose features across crates like this: the

`fail/failpoints` syntax works in the cargo CLI but not from a macro in

some crate other than `fail`.

## Summary of changes

Remove the lines that try to check `fail/failpoints` from the pageserver

entrypoint module. This has no functional impact but makes the code

slightly easier to understand when trying to make sense of the line

printed on startup.

## Problem

Pre-requisites for #4852 and #4853

## Summary of changes

1. Include the client's IP address (which we already log) in the span
   info so that it appears on all associated logs. This makes it easier
   to build dashboards based on IP addresses.

2. Switch to a consistent error/warning log for errors during

connection. This includes error, num_retries, retriable=true/false and a

consistent log message that we can grep for.

## Problem

The functions `DeltaLayer::load_inner` and `ImageLayer::load_inner` are

calling `read_blk` internally, which we would like to turn into an async

fn.

## Summary of changes

We switch from `once_cell`'s `OnceCell` implementation to the one in

`tokio` in order to be able to call an async `get_or_try_init` function.

Builds on top of #4839, part of #4743

During the deploys of 2023-08-03 we logged too much on shutdown. Fix the
logging by timing each top-level shutdown step and warning if a step
takes longer than a rough threshold, based on how long I think it should
take. Also remove all shutdown logging from background tasks, since
there is already "shutdown is taking a long time" logging.

Co-authored-by: John Spray <john@neon.tech>

## Problem

`DiskBtreeReader::get` and `DiskBtreeReader::visit` both call `read_blk`
internally, which we would like to make async in the future. This PR
focuses on making the interface of these two functions `async`. There is
further work to be done to make `visit` non-recursive, similar to #4838.
For that, see https://github.com/neondatabase/neon/pull/4884.

Builds on top of https://github.com/neondatabase/neon/pull/4839, part of

https://github.com/neondatabase/neon/issues/4743

## Summary of changes

Make `DiskBtreeReader::get` and `DiskBtreeReader::visit` async functions

and `await` in the places that call these functions.

## Problem

When setting up for the first time I hit a couple of nits running tests:

- It wasn't obvious that `openssl` and `poetry` were needed (poetry is
  mentioned rather obliquely via "dependency installation notes" instead
  of being in the list of rpm/deb packages to install).

- It wasn't obvious how to get the tests to run for just particular

parameters (e.g. just release mode)

## Summary of changes

Add openssl and poetry to the package lists.

Add an example of how to run pytest for just a particular build type and

postgres version.

## Problem

`neon_fixtures.py` has grown to an unmanageable size and attracts
conflicts.

When adding specific utils under, for example, `fixtures/pageserver`,
things sometimes need to import from `neon_fixtures.py`, which creates a
circular import. This is usually only needed for type annotations, so
the `typing.TYPE_CHECKING` flag can mask the issue. Nevertheless, I
believe that splitting `neon_fixtures.py` into smaller parts is a better
approach.

Currently the PR contains small things, but I plan to continue and move
NeonEnv to its own `fixtures.env` module. To keep the diff small, I
think this PR can already be merged to cause fewer conflicts.

UPD: it looks like it's currently not really possible to fully avoid
using `typing.TYPE_CHECKING`, because some components depend directly on
each other, i.e. the Env -> Cli -> Env cycle. But it's still worth
avoiding it in as many places as possible, and decreasing the size of
`neon_fixtures.py` still makes sense.

## Problem

If AWS credentials are not set locally (via

AWS_ACCESS_KEY_ID/AWS_SECRET_ACCESS_KEY env vars)

`test_remote_library[release-pg15-mock_s3]` test fails with the

following error:

```

ERROR could not start the compute node: Failed to download a remote file: Failed to download S3 object: failed to construct request

```

## Summary of changes

- set AWS credentials for endpoints programmatically

## Problem

The k-merge in pageserver compaction currently relies on iterators over

the keys and also over the values. This approach does not support async

code because we are using iterators and those don't support async in

general. Also, the k-merge implementation we use doesn't support async

either. Instead, as we already load all the keys into memory, just do

sorting in-memory.

## Summary of changes

The PR can be read commit-by-commit, but most importantly, it:

* Stops using kmerge in compaction, using slice sorting instead.

* Makes `load_keys` and `load_val_refs` async, using `Handle::block_on`

in the compaction code as we don't want to turn the compaction function,

called inside `spawn_blocking`, into an async fn.
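
As a rough illustration of the first bullet (not the actual compaction code): when every layer's entries are already in memory, a k-way merge can be replaced by concatenating the per-layer vectors and sorting the result. The `Entry` tuple type and the `merge_by_sort` name are assumptions made for this sketch.

```rust
/// Hypothetical stand-in for a delta layer entry: (key, lsn).
type Entry = (u64, u64);

/// Combine the per-layer entries into one globally ordered vector.
/// The inputs do not need to be sorted; the result is ordered by key,
/// then by lsn (tuple ordering is lexicographic).
fn merge_by_sort(layers: Vec<Vec<Entry>>) -> Vec<Entry> {
    let mut all: Vec<Entry> = layers.into_iter().flatten().collect();
    // An unstable sort is enough here: equal tuples are indistinguishable.
    all.sort_unstable();
    all
}
```

Unlike a lazy k-merge, this needs all entries in memory at once, which matches the situation described in the Problem section.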

Builds on top of #4836, part of

https://github.com/neondatabase/neon/issues/4743

## Problem

Error messages like these come up during normal operations:

```

Compaction failed, retrying in 2s: timeline is Stopping

Compaction failed, retrying in 2s: Cannot run compaction iteration on inactive tenant

```

## Summary of changes

Add explicit handling for the shutdown case in these locations, to

suppress error logs.

as needed since #4715 or this will happen:

```

ERROR panic{thread=main location=.../hyper-rustls-0.23.2/src/config.rs:48:9}: no CA certificates found

```

Add infrastructure to dynamically load postgres extensions and shared libraries from remote extension storage.

Before Postgres starts, `compute_ctl` downloads the list of available remote extensions and libraries, and also downloads the `shared_preload_libraries`. After Postgres is running, `compute_ctl` listens for HTTP requests to load files.

Postgres has a new GUC, `extension_server_port`, to specify the port on which `compute_ctl` listens for requests.

When PostgreSQL requests a file, `compute_ctl` downloads it.

See more details about feature design and remote extension storage layout in docs/rfcs/024-extension-loading.md

---------

Co-authored-by: Anastasia Lubennikova <anastasia@neon.tech>

Co-authored-by: Alek Westover <alek.westover@gmail.com>

Two stabs at this, by mocking a http receiver and the globals out (now

reverted) and then by separating the timeline dependency and just

testing what kind of events certain timelines produce. I think this

pattern could work for some of our problems.

Follow-up to #4822.

## Problem

When the eviction threshold is an integer multiple of the eviction

period, it is unreliable to skip imitating accesses based on whether the

last imitation was more recent than the threshold.

This is because a finite amount of time passes between the time used for
the periodic execution and the 'now' time used for updating
`last_layer_access_imitation`. When this is just a few milliseconds, and
everything else is on time, a 5 second threshold with a 1 second period
will end up entering its 5th iteration slightly _less than_ 5 seconds
since `last_layer_access_imitation`, and thereby skip the imitation
instead of running it. If a few milliseconds then pass before we check
the access time of a file that _should_ have been bumped by the
imitation pass, we end up evicting something we shouldn't have evicted.

## Summary of changes

We can make this race far less likely by using the threshold minus one

interval as the period for re-executing the imitate_layer_accesses: that

way we're not vulnerable to racing by just a few millis, and there would

have to be a delay of the order `period` to cause us to wrongly evict a

layer.
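
The difference between the two checks can be sketched as follows. This is only an illustration of the reasoning above, assuming the decision reduces to comparing the time since the last imitation against a cutoff; the function names are hypothetical and not taken from the pageserver code.

```rust
use std::time::Duration;

/// Old check: skip the imitation unless a full `threshold` has elapsed.
fn should_imitate_old(since_last: Duration, threshold: Duration) -> bool {
    since_last >= threshold
}

/// New check: re-run once `threshold - period` has elapsed, which leaves
/// one whole period of slack instead of a few milliseconds.
fn should_imitate_new(since_last: Duration, threshold: Duration, period: Duration) -> bool {
    since_last >= threshold.saturating_sub(period)
}
```

With a 5 s threshold and a 1 s period, an iteration that starts 4.997 s after the last imitation is skipped by the old check but runs under the new one.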

This is not a complete solution: it would be good to revisit this and

use a non-walltime mechanism for pinning these layers into local

storage, rather than relying on bumping access times.

## Problem

The k-merge in pageserver compaction currently relies on iterators over

the keys and also over the values. This approach does not support async

code because we are using iterators and those don't support async in

general. Also, the k-merge implementation we use doesn't support async

either. Instead, as we already load all the keys into memory, the plan

is to just do the sorting in-memory for now, switch to async, and then

once we want to support workloads that don't have all keys stored in

memory, we can look into switching to a k-merge implementation that

supports async instead.

## Summary of changes

The core of this PR is the move from functions on the `PersistentLayer`
trait that return custom iterator types to inherent functions on
`DeltaLayer` that return buffers with all keys or value references.

Value references are a type we created in this PR, containing a

`BlobRef` as well as an `Arc` pointer to the `DeltaLayerInner`, so that

we can lazily load the values during compaction. This preserves the

property of the current code.

This PR does not switch us to doing the k-merge via sort on slices, but

with this PR, doing such a switch is relatively easy and only requires

changes of the compaction code itself.

Part of https://github.com/neondatabase/neon/issues/4743

## Problem

See https://neondb.slack.com/archives/C036U0GRMRB/p1689148023067319

## Summary of changes

Define NEON_SMGR in smgr.h


---------

Co-authored-by: Konstantin Knizhnik <knizhnik@neon.tech>

We want to have `timeline_written_size_delta`, which is defined as the
difference between the current `timeline_written_size` and the
previously sent `timeline_written_size`.

The solution is to send it. On the first round, the
`disk_consistent_lsn` captured at `load` time is used. After that, an
incremental "event" is sent on every collection. Incremental "events"
are not part of deduplication.

I've added some infrastructure to allow somewhat typesafe

`EventType::Absolute` and `EventType::Incremental` factories per

metrics, now that we have our first `EventType::Incremental` usage.

## Problem

Compatibility tests fail from time to time due to `pg_tenant_only_port`

port collision (added in https://github.com/neondatabase/neon/pull/4731)

## Summary of changes

- replace `pg_tenant_only_port` value in config with new port

- remove old logic that we don't need anymore

- unify config overrides

## Problem

Wrong use of `conf.listen_pg_addr` in `error!()`.

## Summary of changes

Use `listen_pg_addr_tenant_only` instead of `conf.listen_pg_addr`.

Signed-off-by: yaoyinnan <35447132+yaoyinnan@users.noreply.github.com>

## Problem

1. In the CacheInvalid state loop, we weren't checking `num_retries`. If
   it managed to get up to `32`, the `retry_after` procedure would
   compute 2^32, which would overflow to 0 and trigger a division by
   zero.

2. When fixing the above, I started working on a flow diagram for the

state machine logic and realised it was more complex than it had to be:

a. We start in a `Cached` state

b. `Cached`: call `connect_once`. After the first connect_once error, we

always move to the `CacheInvalid` state, otherwise, we return the

connection.

c. `CacheInvalid`: we attempt to `wake_compute` and we either switch to

Cached or we retry this step (or we error).

d. `Cached`: call `connect_once`. We either retry this step or we have a

connection (or we error) - After num_retries > 1 we never switch back to

`CacheInvalid`.

## Summary of changes

1. Insert a `num_retries` check in the `handle_try_wake` procedure. Also
   use floats in the `retry_after` procedure to prevent the overflow
   entirely.

2. Refactor connect_to_compute to be more linear in design.
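
To illustrate the overflow from item 1 and the float-based alternative, here is a minimal sketch. The base delay and growth factor are assumptions picked for the example, and `pow2_checked` and this `retry_after` are hypothetical helpers, not the proxy's actual code.

```rust
use std::time::Duration;

/// Assumed values for illustration only.
const BASE_DELAY_MS: f64 = 100.0;
const BACKOFF_FACTOR: f64 = 2.0;

/// Integer exponentiation: returns `None` once 2^n no longer fits in a u32,
/// which is the point where wrapping arithmetic would yield 0.
fn pow2_checked(num_retries: u32) -> Option<u32> {
    2u32.checked_pow(num_retries)
}

/// Float-based backoff: the power grows towards infinity instead of
/// wrapping to zero, and the float-to-integer cast saturates.
fn retry_after(num_retries: u32) -> Duration {
    let exp = num_retries.min(i32::MAX as u32) as i32;
    let millis = BASE_DELAY_MS * BACKOFF_FACTOR.powi(exp);
    Duration::from_millis(millis as u64)
}
```

Here `pow2_checked(32)` is `None` and `2u32.wrapping_pow(32)` is `0`, while `retry_after(32)` is simply a very long delay. A cap on `num_retries` is still needed to keep delays reasonable.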

## Problem

Existing IndexPart unit tests only exercised the version 1 format (i.e.

without deleted_at set).

## Summary of changes

Add a test that sets version to 2, and sets a value for deleted_at.

Closes https://github.com/neondatabase/neon/issues/4162

## Problem

`DiskBtreeReader::dump` calls `read_blk` internally, which we want to

make async in the future. As it is currently relying on recursion, and

async doesn't like recursion, we want to find an alternative to that and

instead traverse the tree using a loop and a manual stack.

## Summary of changes

* Make `DiskBtreeReader::dump` and all the places calling it async

* Make `DiskBtreeReader::dump` non-recursive internally and use a stack

instead. It now deparses the node in each iteration, which isn't

optimal, but on the other hand it's hard to store the node as it is

referencing the buffer. Self referential data are hard in Rust. For a

dumping function, speed isn't a priority so we deparse the node multiple

times now (up to branching factor many times).
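
The general recursion-to-stack transformation can be sketched on a plain in-memory tree. This is a simplified illustration under assumed types (`Node`, `dump_iterative`); the real function works on disk blocks and, as described above, re-parses nodes rather than holding references to them.

```rust
/// Hypothetical in-memory tree; the real B-tree nodes live in disk blocks.
struct Node {
    key: u32,
    children: Vec<Node>,
}

/// Visit the tree depth-first without recursion, returning (depth, key)
/// pairs in the order a recursive pre-order dump would print them.
fn dump_iterative(root: &Node) -> Vec<(usize, u32)> {
    let mut out = Vec::new();
    let mut stack = vec![(0usize, root)];
    while let Some((depth, node)) = stack.pop() {
        out.push((depth, node.key));
        // Push children in reverse so the leftmost child is popped first.
        for child in node.children.iter().rev() {
            stack.push((depth + 1, child));
        }
    }
    out
}
```

Because the loop body contains no recursive call, the same shape carries over to an `async fn` without boxing a recursive future.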

Part of https://github.com/neondatabase/neon/issues/4743

I have verified that output is unchanged by comparing the output of this

command both before and after this patch:

```

cargo test -p pageserver -- particular_data --nocapture

```

## Problem

ref https://github.com/neondatabase/neon/pull/4721, ref

https://github.com/neondatabase/neon/issues/4709

## Summary of changes

This PR adds unit tests for wake_compute.

The patch adds a new variant `Test` to auth backends. When

`wake_compute` is called, we will verify if it is the exact operation

sequence we are expecting. The operation sequence now contains 3 more

operations: `Wake`, `WakeRetry`, and `WakeFail`.

The unit tests for proxy connects are now complete and I'll continue

work on WebSocket e2e test in future PRs.

---------

Signed-off-by: Alex Chi Z <chi@neon.tech>

## Problem

See: https://neondb.slack.com/archives/C04USJQNLD6/p1689973957908869

## Summary of changes

Bump Postgres version


---------

Co-authored-by: Konstantin Knizhnik <knizhnik@neon.tech>

## Problem

We have some amount of outdated logic in test_compatibility, that we

don't need anymore.

## Summary of changes

- Remove `PR4425_ALLOWED_DIFF` and tune `dump_differs` method to accept

allowed diffs in the future (a cleanup after

https://github.com/neondatabase/neon/pull/4425)

- Remove etcd-related code (a cleanup after
  https://github.com/neondatabase/neon/pull/2733)

- Don't set `preserve_database_files`

Run `pg_amcheck` in forward and backward compatibility tests to catch

some data corruption.

## Summary of changes

- Add amcheck compiling to Makefile

- Add `pg_amcheck` to test_compatibility

The test was starting two endpoints on the same branch as discovered by

@petuhovskiy.

The fix is to allow passing branch-name from the python side over to

neon_local, which already accepted it.

Split from #4824, which will handle making this more misuse resistant.

error is not needed because anyhow will have the cause chain reported

anyways.

related to test_neon_cli_basics being flaky, but doesn't actually fix
any flakiness, just the obvious stray `{e}`.

## Problem

wake_compute can fail sometimes but is eligible for retries. We retry

during the main connect, but not during auth.

## Summary of changes

Retry `wake_compute` during the auth flow if there was an error talking
to the control plane, or if there was a temporary error in waking the
compute node.

## Problem

In https://github.com/neondatabase/neon/issues/4743 , I'm trying to make

more of the pageserver async, but in order for that to happen, I need to

be able to persist the result of `ImageLayer::load` across await points.

For that to happen, the return value needs to be `Send`.

## Summary of changes

Use `OnceLock` in the image layer instead of manually implementing it

with booleans, locks and `Option`.
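
A minimal sketch of the lazy-initialization pattern with `std::sync::OnceLock`, assuming a simplified layer type. `LazyLayer`, `Inner` and its field are hypothetical stand-ins, and the `loads` counter exists only to show that the initializer runs once.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::OnceLock;

/// Hypothetical stand-in for the lazily loaded part of an image layer.
struct Inner {
    index_root_blk: u32,
}

struct LazyLayer {
    inner: OnceLock<Inner>,
    /// Counts how often the expensive load actually ran (demo only).
    loads: AtomicUsize,
}

impl LazyLayer {
    fn new() -> Self {
        LazyLayer { inner: OnceLock::new(), loads: AtomicUsize::new(0) }
    }

    /// Returns the loaded state, running the initializer at most once.
    fn load(&self) -> &Inner {
        self.inner.get_or_init(|| {
            self.loads.fetch_add(1, Ordering::SeqCst);
            Inner { index_root_blk: 42 }
        })
    }
}
```

`load` hands out a plain shared reference, which is `Send` as long as `Inner` is `Sync`, so it can be held across an await point.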

Part of #4743

## Problem

The first session event we emit is after we receive the first startup

packet from the client. This means we can't detect any issues between

TCP open and handling of the first PG packet

## Summary of changes

Add some new logs for websocket upgrade and connection handling

## Problem

We've got an example of Allure reports from 2 different runners for the
same build that started to upload at the exact same second, making one
overwrite the other.

## Summary of changes

- Use the Postgres version to distinguish artifacts (along with the

build type)

We need some real extensions in S3 to accurately test the code for

handling remote extensions.

In this PR we just upload three extensions (anon, kq_imcx and postgis), which is

enough for testing purposes for now. In addition to creating and

uploading the extension archives, we must generate a file

`ext_index.json` which specifies important metadata about the

extensions.

---------

Co-authored-by: Anastasia Lubennikova <anastasia@neon.tech>

Co-authored-by: Alexander Bayandin <alexander@neon.tech>

## Problem

We want to measure how many users are using TCP/WS connections.

We also want to measure how long it takes to establish a connection with

the compute node.

I plan to also add a separate counter for HTTP requests, but because of

pooling this needs to be disambiguated against new HTTP compute

connections

## Summary of changes

* record connection type (ws/tcp) in the connection counters.

* record connection latency including retry latency

## Problem

Currently we delete local files first, so if the pageserver restarts
after local file deletion, remote deletion is not continued. This can be
solved by inverting these steps.

But even if these steps are inverted, once index_part.json is deleted
there is no way to distinguish between "this timeline is good, we just
didn't upload it to remote" and "this timeline is deleted, we should
continue with removal of local state". To solve this we use another mark
file. After the index part is deleted, the presence of this mark file
identifies that a deletion was intended.

An alternative approach that was discussed was to delete everything
except metadata first, and then delete metadata and the index part. In
this case we still do not support local-only configs, making them rather
unsafe (deletion in them is already unsafe, but this approach solidifies
that direction instead of fixing it). Another downside is that if we
crash after local metadata gets removed, we may leave a dangling index
part on the remote, which in theory shouldn't be a big deal because the
file is small.

It is not a big change to choose another approach at this point.

## Summary of changes

Timeline deletion sequence:

1. Set deleted_at in remote index part.

2. Create local mark file.

3. Delete local files except metadata (it is simpler this way, to be

able to reuse timeline initialization code that expects metadata)

4. Delete remote layers

5. Delete index part

6. Delete meta, timeline directory.

7. Delete mark file.

This works for local only configuration without remote storage.

Sequence is resumable from any point.
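
One way to read the resumability claim is as a decision made at startup from two observations: the state of the remote index part and the presence of the local mark file. The sketch below is an interpretation of the description above, not the pageserver's implementation; `Action` and `classify` are hypothetical names.

```rust
/// What a restarted pageserver should do with a timeline it finds locally.
#[derive(Debug, PartialEq)]
enum Action {
    /// Nothing indicates a deletion; treat the timeline as live.
    Keep,
    /// A deletion was started earlier and must be carried to completion.
    ResumeDeletion,
}

/// `remote_deleted_at` is `None` if there is no remote index part (already
/// deleted, never uploaded, or no remote storage configured), and
/// `Some(flag)` if one exists, where `flag` says whether `deleted_at` is set.
/// `mark_file_present` says whether the local deletion mark file exists.
fn classify(remote_deleted_at: Option<bool>, mark_file_present: bool) -> Action {
    if remote_deleted_at == Some(true) || mark_file_present {
        Action::ResumeDeletion
    } else {
        Action::Keep
    }
}
```

The `(None, true)` case is the one the mark file exists for: the index part is already gone, yet the deletion intent is still visible locally.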

resolves #4453

resolves https://github.com/neondatabase/neon/pull/4552 (the issue was
created with async cancellation in mind, but we can still have issues
with retries if metadata is deleted among the first files by
remove_dir_all (which doesn't have any ordering guarantees))

---------

Co-authored-by: Joonas Koivunen <joonas@neon.tech>

Co-authored-by: Christian Schwarz <christian@neon.tech>

This PR adds support for a non-interactive transaction query endpoint.
It accepts an array of queries and parameters and returns an array of
query results. The queries are run in a single transaction, one after
another, on the proxy side.

count only once; on startup create the counter right away because we

will not observe any changes later.

small, probably never reachable from outside fix for #4796.

We currently have a timeseries for each of the tenants in different

states. We only want this for Broken. Other states could be counters.

Fix this by making the `pageserver_tenant_states_count` a counter

without a `tenant_id` and

add a `pageserver_broken_tenants_count` which has a `tenant_id` label,

each broken tenant being 1.

Now that we've refactored `connect_to_compute` to be generic, we can
test it with mock backends. In this PR, we mock the error API and the
connect_once API to test the retry behavior of `connect_to_compute`. In
the next PR, I'll add a mock for credentials so that we can also test
the behavior with `wake_compute`.

ref https://github.com/neondatabase/neon/issues/4709

---------

Signed-off-by: Alex Chi Z <chi@neon.tech>

In #4743, we'd like to convert the read path to use `async` rust. In

preparation of that, this PR switches some functions that are calling

lower level functions like `BlockReader::read_blk`,

`BlockCursor::read_blob`, etc into `async`. The PR does not switch all

functions however, and only focuses on the ones which are easy to

switch.

This leaves around some async functions that are (currently)

unnecessarily `async`, but on the other hand it makes future changes

smaller in diff.

Part of #4743 (but does not completely address it).

Fix mx_offset_to_flags_offset() function

Fixes issue #4774

The Postgres `MXOffsetToFlagsOffset` macro was not correctly converted
to Rust because the cast to u16 was done before taking the modulo. That
order is only valid if the divisor is a power of two.
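
The underlying arithmetic can be shown in isolation. Truncating to `u16` is itself a reduction modulo 65536, so doing it before another modulo only gives the same result when the second modulus divides 65536, that is, when it is a power of two. The helper names and the moduli 512 and 409 below are illustrative choices, not values taken from the fix.

```rust
/// Buggy order: truncate to u16 first, then take the modulo.
fn cast_then_mod(x: u32, modulus: u32) -> u16 {
    (x as u16) % (modulus as u16)
}

/// Correct order: take the modulo on the full-width value, then narrow.
fn mod_then_cast(x: u32, modulus: u32) -> u16 {
    (x % modulus) as u16
}
```

For `x = 70_000`, both orders give 368 with modulus 512, but with modulus 409 the buggy order gives 374 while the correct one gives 61.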

Add a small rust unit test to check that the function produces same results

as the PostgreSQL macro, and extend the existing python test to cover

this bug.

Co-authored-by: Konstantin Knizhnik <knizhnik@neon.tech>

Co-authored-by: Heikki Linnakangas <heikki@neon.tech>

## Problem

close https://github.com/neondatabase/neon/issues/4712

## Summary of changes

Previously, flushing a frozen layer was split into two operations: add
the delta layer to disk + remove the frozen layer from memory. This
caused a short period of time where we had the same data in both the
frozen and the delta layer. In this PR, we merge them into one atomic
operation in the layer map manager, which also simplifies the code. Note
that if we decide to create image layers for L0 flush, it will still be
split into two operations on the layer map.

---------

Signed-off-by: Alex Chi Z <chi@neon.tech>

Co-authored-by: Joonas Koivunen <joonas@neon.tech>

As seen in staging logs with some massive compactions

(create_image_layer), in addition to racing with compaction or gc or

even between two invocations to `evict_layer_batch`.

Cc: #4745

Fixes: #3851 (organic tech debt reduction)

The solution is not to log the Not Found in such cases; it is perfectly
natural for it to happen. The route to this is quite long, but this
implements two cases of "race between two eviction processes", such as
our disk usage based eviction and eviction_task, which both have
separate "let's figure out what to evict" and "let's evict" phases.

Removes a bunch of cases which used `tokio::select` to emulate the

`tokio::time::timeout` function. I've done an additional review on the

cancellation safety of these futures, all of them seem to be

cancellation safe (not that `select!` allows non-cancellation-safe

futures, but as we touch them, such a review makes sense).

Furthermore, I correct a few mentions of a non-existent

`tokio::timeout!` macro in the docs to the `tokio::time::timeout`

function.

Adds in a barrier for the duration of the `Tenant::shutdown`.

`pageserver_shutdown` will join this await, `detach`es and `ignore`s

will not.

Fixes #4429.

---------

Co-authored-by: Christian Schwarz <christian@neon.tech>

Updated the description that appears for hnsw when you query extensions:

```

neondb=> SELECT * FROM pg_available_extensions WHERE name = 'hnsw';

name | default_version | installed_version | comment

----------------------+-----------------+-------------------+--------------------------------------------------

hnsw | 0.1.0 | | ** Deprecated ** Please use pg_embedding instead

(1 row)

```

---------

Co-authored-by: Alexander Bayandin <alexander@neon.tech>

## Problem

We're going to reset the S3 buckets for extensions
(https://github.com/neondatabase/aws/pull/413), and we're soon going to
change the format in which we store extensions on S3. Let's stop
uploading extensions in the old format.

## Summary of changes

- Disable `aws s3 cp` step for extensions

Compute now uses special safekeeper WAL service port allowing auth tokens with

only tenant scope. Adds understanding of this port to neon_local and fixtures,

as well as test of both ports behaviour with different tokens.

ref https://github.com/neondatabase/neon/issues/4730

## Problem

We use a patched version of `sharded-slab` with increased MAX_THREADS

[1]. It is not required anymore because safekeepers are async now.

A valid comment from the original PR, though [1]:

> Note that patch can affect other rust services, not only the safekeeper binary.

- [1] https://github.com/neondatabase/neon/pull/4122

## Summary of changes

- Remove patch for `sharded-slab`

## Problem

Second half of #4699. We were maintaining 2 implementations of
`handle_client`.

## Summary of changes

Merge the handle_client code, but abstract some of the details.


## Problem

Benchmarks run takes about an hour on main branch (in a single job),

which delays pipeline results. And it takes another hour if we want to

restart the job due to some failures.

## Summary of changes

- Use `pytest-split` plugin to run benchmarks on separate CI runners in

4 parallel jobs

- Add `scripts/benchmark_durations.py` for getting benchmark durations

from the database to help `pytest-split` schedule tests more evenly. It

uses p99 for the last 10 days' results (durations).

The current distribution could be better; each worker's durations vary
from 9m to 35m, but this could be improved in subsequent PRs.

## Problem

10 retries * 10 second timeouts makes for a very long retry window.

## Summary of changes

Adds a 2s timeout to sql_over_http connections, and also reduces the 10s

timeout in TCP.

## Problem

Half of #4699.

TCP/WS have one implementation of `connect_to_compute`, HTTP has another

implementation of `connect_to_compute`.

Having both is annoying to deal with.

## Summary of changes

Creates a set of traits `ConnectMechanism` and `ShouldError` that allows

the `connect_to_compute` to be generic over raw TCP stream or

tokio_postgres based connections.

I'm not super happy with this. I think it would be nice to

remove tokio_postgres entirely but that will need a lot more thought to

be put into it.

I have also slightly refactored the caching to use fewer references,
instead using ownership to ensure the state of retrying is encoded in
the type system.

## Problem

Compactions might generate files of exactly the same name as before

compaction due to our naming of layer files. This could have already

caused some mess in the system, and is known to cause some issues like

https://github.com/neondatabase/neon/issues/4088. Therefore, we now

consider duplicated layers in the post-compaction process to avoid

violating the layer map duplicate checks.

related previous works: close

https://github.com/neondatabase/neon/pull/4094

error reported in: https://github.com/neondatabase/neon/issues/4690,

https://github.com/neondatabase/neon/issues/4088

## Summary of changes

If a file already exists in the layer map before the compaction, do not

modify the layer map and do not delete the file. The file on disk at

that time should be the new one overwritten by the compaction process.

This PR also adds a test case with a fail point that produces exactly

the same set of files.

This bypassing behavior is safe because the produced layer files have

the same content / are the same representation of the original file.

An alternative might be directly removing the duplicate check in the

layer map, but I feel it would be good if we can prevent that in the

first place.

---------

Signed-off-by: Alex Chi Z <chi@neon.tech>

Co-authored-by: Konstantin Knizhnik <knizhnik@garret.ru>

Co-authored-by: Heikki Linnakangas <heikki@neon.tech>

Co-authored-by: Joonas Koivunen <joonas@neon.tech>

The `CRITICAL_OPS_BUCKETS` is not useful for getting an accurate

picture of basebackup latency because all the observations

that negatively affect our SLI fall into one bucket, i.e., 100ms-1s.

Use the same buckets as control plane instead.

This should only affect the version of the vm-informant used. The only

change to the vm-informant from v0.12.1 to v0.13.1 was:

* https://github.com/neondatabase/autoscaling/pull/407

Just a typo fix; worth getting in anyways.

## Problem

CI doesn't work for external contributors (for PRs from forks), see

#2222 for more information.

I'm proposing the following:

- External PR is created

- PR is reviewed so that it doesn't contain any malicious code

- Label `approved-for-ci-run` is added to that PR (by the reviewer)

- A new workflow picks up this label and creates an internal branch from

that PR (the branch name is `ci-run/pr-*`)

- CI is run on the branch, but the results are also propagated to the
  PR's checks

- We can merge a PR itself if it's green; if not — repeat.

## Summary of changes

- Create `approved-for-ci-run.yml` workflow which handles

`approved-for-ci-run` label

- Trigger `build_and_test.yml` and `neon_extra_builds.yml` workflows on

`ci-run/pr-*` branches

## Problem

`cargo +nightly doc` is giving a lot of warnings: broken links, naked

URLs, etc.

## Summary of changes

* update the `proc-macro2` dependency so that it can compile on latest

Rust nightly, see https://github.com/dtolnay/proc-macro2/pull/391 and

https://github.com/dtolnay/proc-macro2/issues/398

* allow the `private_intra_doc_links` lint, as linking to something

that's private is always more useful than just mentioning it without a

link: if the link breaks in the future, at least there is a warning due

to that. Also, one might enable

[`--document-private-items`](https://doc.rust-lang.org/cargo/commands/cargo-doc.html#documentation-options)

in the future and make these links work in general.

* fix all the remaining warnings given by `cargo +nightly doc`

* make it possible to run `cargo doc` on stable Rust by updating

`opentelemetry` and associated crates to version 0.19, pulling in a fix

that previously broke `cargo doc` on stable:

https://github.com/open-telemetry/opentelemetry-rust/pull/904

* Add `cargo doc` to CI to ensure that it won't get broken in the

future.

Fixes #2557

## Future work

* Potentially, it might make sense, for development purposes, to publish

the generated rustdocs somewhere, like for example [how the rust

compiler does

it](https://doc.rust-lang.org/nightly/nightly-rustc/rustc_driver/index.html).

I will file an issue for discussion.

Handle test failures like:

```

AssertionError: assert not ['$ts WARN delete_timeline{tenant_id=X timeline_id=Y}: About to remove 1 files\n']

```

Instead of logging:

```

WARN delete_timeline{tenant_id=X timeline_id=Y}: Found 1 files not bound to index_file.json, proceeding with their deletion

WARN delete_timeline{tenant_id=X timeline_id=Y}: About to remove 1 files

```

For each one operation of timeline deletion, list all unref files with

`info!`, and then continue to delete them with the added spice of

logging the rare/never happening non-utf8 name with `warn!`.

Rationale for `info!` instead of `warn!`: this is a normal operation;

like we had mentioned in `test_import.py` -- basically whenever we

delete a timeline which is not idle.

Rationale for N * (`info!`|`warn!`): symmetry for the layer deletions;

if we could ever need those, we could also need these for layer files

which are not yet mentioned in `index_part.json`.

---------

Co-authored-by: Christian Schwarz <christian@neon.tech>

Tests cannot be run without configuring tracing. Split from #4678.

Does not nag about the span checks when there is no subscriber

configured, because then the spans will have no links and nothing can be

checked. Sadly the `SpanTrace::status()` cannot be used for this.

`tracing` is always configured in regress testing (running with

`pageserver` binary), which should be enough.

Additionally cleans up the test code in span checks to be in the test

code. Fixes a `#[should_panic]` test which was flaky before these

changes, but the `#[should_panic]` hid the flakiness.

Rationale for need: Unit tests might not be testing only the public or

`feature="testing"` APIs which are only testable within `regress` tests

so not all spans might be configured.

## Problem

ref https://github.com/neondatabase/neon/issues/4373

## Summary of changes

A step towards immutable layer map. I decided to finish the refactor

with this new approach and apply

https://github.com/neondatabase/neon/pull/4455 on this patch later.

In this PR, we moved all modifications of the layer map to one place

with semantic operations like `initialize_local_layers`,

`finish_compact_l0`, `finish_gc_timeline`, etc, which is now part

of `LayerManager`. This makes it easier to build new features upon

this PR:

* For immutable storage state refactor, we can simply replace the layer

map with `ArcSwap<LayerMap>` and remove the `layers` lock. Moving

towards it requires us to put all layer map changes in a single place as

in https://github.com/neondatabase/neon/pull/4455.

* For manifest, we can write to manifest in each of the semantic

functions.

---------

Signed-off-by: Alex Chi Z <chi@neon.tech>

Co-authored-by: Christian Schwarz <christian@neon.tech>

If a database was created with `is_template true`, Postgres doesn't allow

dropping it right away and throws the error

```

ERROR: cannot drop a template database

```

so we have to unset `is_template` first.

Fixing it, I noticed that our `escape_literal` isn't exactly correct

and following the same logic as in `quote_literal_internal`, we need to

prepend string with `E`. Otherwise, it's not possible to filter

`pg_database` using `escape_literal()` result if name contains `\`, for

example.
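
A minimal sketch of the quoting rule described above, modelled on the behaviour of Postgres' `quote_literal`: single quotes and backslashes are doubled, and the `E` prefix is added only when the value contains a backslash. The function name `escape_literal_sketch` is hypothetical, and this is not necessarily the exact code in the PR.

```rust
/// Quotes `s` as a Postgres string literal.
///
/// Single quotes and backslashes are doubled. If the value contains a
/// backslash, the literal is prefixed with `E` so that Postgres reads the
/// doubled backslashes as escapes regardless of server settings.
pub fn escape_literal_sketch(s: &str) -> String {
    let escaped = s.replace('\'', "''").replace('\\', "\\\\");
    if escaped.contains('\\') {
        format!("E'{}'", escaped)
    } else {
        format!("'{}'", escaped)
    }
}
```

For a database named `a\b` this yields `E'a\\b'`, which compares equal to the stored name when used in a `WHERE datname = ...` filter on `pg_database`.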

Also use `FORCE` to drop database even if there are active connections.

We run this from `cloud_admin`, so it should have enough privileges.

NB: there could be other db states, which prevent us from dropping

the database. For example, if db is used by any active subscription

or logical replication slot.

TODO: deal with it once we allow logical replication. Proper fix should

involve returning an error code to the control plane, so it could

figure out that this is a non-retryable error, return it to the user and

mark operation as permanently failed.

Related to neondatabase/cloud#4258

## Problem

In the logs, I noticed we still weren't retrying in some cases. Seemed

to be timeouts but we explicitly wanted to handle those

## Summary of changes

Retry on io::ErrorKind::TimedOut errors.

Handle IO errors in tokio_postgres::Error.
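
A sketch of the kind of classification this implies, using only `std::io`. `TimedOut` comes from the summary above; the other two error kinds and the function name `should_retry` are assumptions made for illustration.

```rust
use std::io;

/// Decides whether an IO error is worth retrying.
///
/// `TimedOut` is the case named in the summary; the other kinds listed here
/// are assumptions about what a connect path might also treat as transient.
pub fn should_retry(err: &io::Error) -> bool {
    matches!(
        err.kind(),
        io::ErrorKind::ConnectionRefused
            | io::ErrorKind::AddrNotAvailable
            | io::ErrorKind::TimedOut
    )
}
```

An error from a higher-level client can be classified the same way once its underlying `io::Error` has been extracted from the error's source chain.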

## Problem

It took me a while to understand the purpose of all the tasks spawned in

the main functions.

## Summary of changes

Using the type system and fewer macros, plus many more comments,

document the shutdown procedure of each task in detail.

Recently we started doing sync-safekeepers before exiting compute_ctl,

expecting that it will make next sync faster by skipping recovery. But

recovery is still running in some cases

(https://github.com/neondatabase/neon/pull/4574#issuecomment-1629256166)

because of the lagging truncateLsn. This PR should help with updating

truncateLsn.

This addresses the issue in #4526 by adding a test that reproduces the

race condition that gave rise to the bug (or at least *a* race condition

that gave rise to the same error message), and then implementing a fix

that just prints a message to the log if a file could not be found for

uploading. Even though the underlying race condition is not fixed yet,

this will un-block the upload queue in that situation, greatly reducing

the impact of such a (rare) race.

Fixes #4526.

## Problem

Postgres submodule can be changed unintentionally, and these changes are

easy to miss during the review.

Adding a check that should prevent this from happening, the check fails

`build-neon` job with the following message:

```

Expected postgres-v14 rev to be at '1414141414141414141414141414141414141414', but it is at '1144aee1661c79eec65e784a8dad8bd450d9df79'

Expected postgres-v15 rev to be at '1515151515151515151515151515151515151515', but it is at '1984832c740a7fa0e468bb720f40c525b652835d'

Please update vendors/revisions.json if these changes are intentional.

```

This is an alternative approach to

https://github.com/neondatabase/neon/pull/4603

## Summary of changes

- Add `vendor/revisions.json` file with expected revisions

- Add build-time check (to `build-neon` job) that Postgres submodules

match revisions from `vendor/revisions.json`

- A couple of small improvements for logs from

https://github.com/neondatabase/neon/pull/4603

- Fixed GitHub autocomment for the case where no tests were run

---------

Co-authored-by: Joonas Koivunen <joonas@neon.tech>

The comment referenced an issue that was already closed. Remove that

reference and replace it with an explanation why we already don't print

an error.

See discussion in

https://github.com/neondatabase/neon/issues/2934#issuecomment-1626505916

For the TOCTOU fixes, the two calls after the `.exists()` both didn't

handle the situation well where the file was deleted after the initial

`.exists()`: one would assume that the path wasn't a file, giving a bad

error, the second would give an accurate error but that's not wanted

either.

We remove both racy `exists` and `is_file` checks, and instead just look

for errors about files not being found.
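
The pattern can be sketched as follows: attempt the operation directly and treat `NotFound` as an ordinary outcome, rather than checking `exists()` first. The helper name `read_if_present` is hypothetical.

```rust
use std::fs;
use std::io;
use std::path::Path;

/// Reads a file, treating "file not found" as an expected outcome.
///
/// There is no `exists()` or `is_file()` check up front, so there is no
/// window in which the file can disappear between the check and the read.
pub fn read_if_present(path: &Path) -> io::Result<Option<Vec<u8>>> {
    match fs::read(path) {
        Ok(bytes) => Ok(Some(bytes)),
        Err(e) if e.kind() == io::ErrorKind::NotFound => Ok(None),
        Err(e) => Err(e),
    }
}
```

Any other error (permissions, the path being a directory, and so on) is still propagated to the caller unchanged.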

## Problem

If we fail to wake up the compute node, a subsequent connect attempt

will definitely fail. However, kubernetes won't fail the connection

immediately, instead it hangs until we timeout (10s).

## Summary of changes

Refactor the loop to allow fast retries of compute_wake and to skip a

connect attempt.

## Problem

Binaries created from PRs (both in docker images and for tests) have

wrong git-env versions, they point to phantom merge commits.

## Summary of changes

- Prefer GIT_VERSION env variable even if git information was accessible

- Use `${{ github.event.pull_request.head.sha || github.sha }}` instead

of `${{ github.sha }}` for `GIT_VERSION` in workflows

So the builds will still happen from this phantom commit, but we will

report the PR commit.

---------

Co-authored-by: Joonas Koivunen <joonas@neon.tech>

## Problem

part of https://github.com/neondatabase/neon/pull/4340

## Summary of changes

Remove LayerDescriptor and remove `todo!`. At the same time, this PR

adds `AsLayerDesc` trait for all persistent layers and changes

`LayerFileManager` to have a generic type. For tests, we are now using

`LayerObject`, which is a wrapper around `PersistentLayerDesc`.

---------

Signed-off-by: Alex Chi Z <chi@neon.tech>

Before this PR, during shutdown, we'd find naked logs like this one for every active page service connection:

```

2023-07-05T14:13:50.791992Z INFO shutdown request received in run_message_loop

```

This PR

1. adds a peer_addr span field to distinguish the connections in logs

2. sets the tenant_id / timeline_id fields earlier

It would be nice to have `tenant_id` and `timeline_id` directly on

the `page_service_conn_main` span (empty, initially), then set

them at the top of `process_query`.

The problem is that the debug asserts for `tenant_id` and

`timeline_id` presence in the tracing span don't support

detecting empty values [1].

So, I'm a bit hesitant about over-using `Span::record`.

[1] https://github.com/neondatabase/neon/issues/4676

We see the following log lines occasionally in prod:

```

kill_and_wait_impl{pid=1983042}: wait successful exit_status=signal: 9 (SIGKILL)

```

This PR makes it easier to find the tenant for the pid, by including the

tenant id as a field in the span.

It started from a few config methods that have various orderings and

sometimes use references, sometimes not. So I unified the path manipulation

methods to always order tenant_id before timeline_id and to take references,

because we don't need owned values.

Similar changes happened to call-sites of config methods.

I'd say it's a good idea to always order tenant_id before timeline_id so

it is consistent across the whole codebase.

## Problem

`docker build ... -f Dockerfile.compute-node ...` fails on ARM (I'm

checking on macOS).

## Summary of changes

- Download the arm version of cmake on arm

## Problem

#4598 compute nodes are not accessible some time after wake up due to

kubernetes DNS not being fully propagated.

## Summary of changes

Update connect retry mechanism to support handling IO errors and

sleeping for 100ms
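
A blocking, std-only sketch of such a retry loop. The real code is async; here `std::thread::sleep` stands in for an async sleep, and the function name, the `max_attempts` parameter, and the choice to retry on every IO error are assumptions for the example.

```rust
use std::io;
use std::thread;
use std::time::Duration;

/// Calls `connect` until it succeeds or `max_attempts` attempts were made,
/// sleeping for `delay` between attempts. The last error is returned if
/// every attempt fails. `connect` is always called at least once.
pub fn connect_with_retries<T>(
    max_attempts: u32,
    delay: Duration,
    mut connect: impl FnMut() -> io::Result<T>,
) -> io::Result<T> {
    let mut attempt = 1;
    loop {
        match connect() {
            Ok(conn) => return Ok(conn),
            // Still have attempts left: wait a little and try again.
            Err(_) if attempt < max_attempts => {
                thread::sleep(delay);
                attempt += 1;
            }
            // Out of attempts: surface the last error.
            Err(e) => return Err(e),
        }
    }
}
```

With the 100ms delay from the summary, a call would look like `connect_with_retries(n, Duration::from_millis(100), || try_connect())`, where `n` and `try_connect` are placeholders.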

## Checklist before requesting a review

- [x] I have performed a self-review of my code.

- [ ] If it is a core feature, I have added thorough tests.

- [ ] Do we need to implement analytics? if so did you add the relevant

metrics to the dashboard?

- [ ] If this PR requires public announcement, mark it with

/release-notes label and add several sentences in this section.

## Problem

I was reading the code of the page server today and found these minor

things that I thought could be cleaned up.

## Summary of changes

* remove a redundant indentation layer and continue in the flushing loop

* use the builtin `PartialEq` check instead of hand-rolling a `range_eq`

function

* Add a missing `>` to a prominent doc comment

General Rust Write trait semantics (as well as its async brother) is that write

definitely happens only after Write::flush(). This wasn't needed in sync where

rust write calls the syscall directly, but is required in async.
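
The contract can be demonstrated with std alone. In this sketch a `BufWriter` over a `Vec<u8>` stands in for any writer that is allowed to hold data back until `flush()`; the helper name is hypothetical and the buffer capacity is chosen so that the write stays buffered.

```rust
use std::io::{self, BufWriter, Write};

/// Writes `msg` through a buffered writer and reports how many bytes had
/// reached the underlying sink before and after the explicit flush.
pub fn bytes_visible_before_and_after_flush(msg: &[u8]) -> io::Result<(usize, usize)> {
    // Capacity is larger than `msg`, so `write_all` only fills the buffer.
    let mut writer = BufWriter::with_capacity(msg.len() + 64, Vec::new());
    writer.write_all(msg)?;
    let before = writer.get_ref().len();
    // Only after `flush` is the data guaranteed to be in the sink.
    writer.flush()?;
    let after = writer.get_ref().len();
    Ok((before, after))
}
```

A successful `write_all` therefore says nothing about delivery; code that needs the bytes on the wire has to flush.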

Also fix setting initial end_pos in walsender, sometimes it was from the future.

fixes https://github.com/neondatabase/neon/issues/4518

## Problem

Compute is not always able to reconnect to the page server.

First of all, this is caused by the long restart time of the pageserver.

So the number of attempts is increased from 5 (hardcoded) to 60 (GUC).

Also, we do not perform a flush after each command, to increase performance

(it is especially critical for prefetch).

Unfortunately, such a pending flush makes it impossible to transparently

reconnect to a restarted pageserver.

What we can do is try to minimize the probability of this.

Most likely, a broken connection will be detected in the first send command

after some idle period.

This is why the max_flush_delay parameter is added, which forces a flush to be

performed after the first request after some idle period.

See #4497

## Summary of changes

Add the neon.max_reconnect_attempts and neon.max_flush_delay GUCs, which

control when a flush has to be done

and when it is possible to try to reconnect to the page server.

## Problem

- Running the command according to docker.md gives warning and error.

- Warning `permissions should be u=rw (0600) or less` is output when

executing `psql -h localhost -p 55433 -U cloud_admin`.

- `FATAL: password authentication failed for user "root"` is output in

compute logs.

## Summary of changes

- Add `$ chmod 600 ~/.pgpass` in docker.md to avoid warning.

- Add username (cloud_admin) to pg_isready command in docker-compose.yml

to avoid error.

---------

Co-authored-by: Tomoka Hayashi <tomoka.hayashi@ntt.com>

```

CREATE EXTENSION embedding;

CREATE TABLE t (val real[]);

INSERT INTO t (val) VALUES ('{0,0,0}'), ('{1,2,3}'), ('{1,1,1}'), (NULL);

CREATE INDEX ON t USING hnsw (val) WITH (maxelements = 10, dims=3, m=3);

INSERT INTO t (val) VALUES (array[1,2,4]);

SELECT * FROM t ORDER BY val <-> array[3,3,3];

val

---------

{1,2,3}

{1,2,4}

{1,1,1}

{0,0,0}

(5 rows)

```

Does three things:

* add a `Display` impl for `LayerFileName` equal to the `short_id`

* based on that, replace the `Layer::short_id` function by a requirement

for a `Display` impl

* use that `Display` impl in the places where the `short_id` and `file_name()` functions were used instead
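
As a sketch of the idea, here is a `Display` impl on a simplified, hypothetical layer file name, plus a helper that only requires `Display` instead of a dedicated `short_id` method. The struct fields and the output format are assumptions and do not reproduce the real `LayerFileName` format.

```rust
use std::fmt;

/// Hypothetical, simplified layer file name: a key range plus an LSN.
pub struct LayerFileNameSketch {
    pub key_start: u32,
    pub key_end: u32,
    pub lsn: u64,
}

impl fmt::Display for LayerFileNameSketch {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        // The formatted name doubles as the short id used in log messages.
        write!(f, "{:04X}-{:04X}__{:08X}", self.key_start, self.key_end, self.lsn)
    }
}

/// Anything that should be loggable by its short id only needs `Display`.
pub fn describe<L: fmt::Display>(layer: &L) -> String {
    format!("layer {}", layer)
}
```

Call sites can then write `{layer}` in format strings and tracing fields instead of calling a separate accessor.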

Fixes #4145

Looking at logs from staging and prod, I found there are a bunch of log

lines without tenant / timeline context.

Manually walk through all task_mgr::spawn lines and fix that using the

least amount of work required.

While doing it, remove some redundant `shutting down` messages.

refs https://github.com/neondatabase/neon/issues/4222

After announcing `hnsw`, there is a hypothesis that the community will

start comparing it with `pgvector` by themselves. Therefore, let's have

an actual version of `pgvector` in Neon.

Cache up to 20 connections per endpoint. Once all pooled connections

are used, the current implementation can open an extra connection, so the

maximum number of simultaneous connections is not enforced.
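
A minimal sketch of this behaviour, assuming a hypothetical `ConnPoolSketch` keyed by endpoint name. Only the limit of 20 cached connections comes from the description; the type, the method names, and the generic connection type are illustrative.

```rust
use std::collections::HashMap;

/// Upper bound on cached (idle) connections per endpoint.
pub const MAX_CONNS_PER_ENDPOINT: usize = 20;

/// Hypothetical per-endpoint cache of idle connections of type `C`.
pub struct ConnPoolSketch<C> {
    idle: HashMap<String, Vec<C>>,
}

impl<C> ConnPoolSketch<C> {
    pub fn new() -> Self {
        Self { idle: HashMap::new() }
    }

    /// Takes a cached connection if one exists, otherwise opens a new one.
    /// Nothing limits how many connections are in use at the same time.
    pub fn get(&mut self, endpoint: &str, open: impl FnOnce() -> C) -> C {
        match self.idle.get_mut(endpoint).and_then(|conns| conns.pop()) {
            Some(conn) => conn,
            None => open(),
        }
    }

    /// Returns a connection to the cache. Reports `false` and drops the
    /// connection if the endpoint already has the maximum number cached.
    pub fn put(&mut self, endpoint: &str, conn: C) -> bool {
        let conns = self.idle.entry(endpoint.to_string()).or_default();
        if conns.len() < MAX_CONNS_PER_ENDPOINT {
            conns.push(conn);
            true
        } else {
            false
        }
    }
}
```

The cap applies only to what is kept around for reuse, which is why the number of simultaneous connections can exceed it.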

There are more things to do here, especially with background clean-up

of closed connections, and checks for transaction state. But the current

implementation allows us to check for the lower connection latencies that

this cache should bring.

## Problem

HNSW index is created in memory.

Try to prevent OOM by checking the available RAM.

## Summary of changes

---------

Co-authored-by: Konstantin Knizhnik <knizhnik@neon.tech>

In case we try to connect to an outdated address that is no longer valid, the

default behavior of Kubernetes is to drop the packets, causing us to wait for

the entire timeout period. We want to fail fast in such cases.

A specific case to consider is when we have cached compute node information

with a 5-minute TTL (Time To Live), but the user has executed a `/suspend` API

call, resulting in the nonexistence of the compute node.

## Problem

While pbkdf2 is a simple algorithm, we should probably use a well tested

implementation

## Summary of changes

* Use pbkdf2 crate

* Use arrays like the hmac comment says

## Checklist before requesting a review

- [X] I have performed a self-review of my code.

- [X] If it is a core feature, I have added thorough tests.

- [ ] Do we need to implement analytics? if so did you add the relevant

metrics to the dashboard?

- [ ] If this PR requires public announcement, mark it with

/release-notes label and add several sentences in this section.

This renames the `pageserver_tenant_synthetic_size` metric to

`pageserver_tenant_synthetic_cached_size_bytes`, as was requested on

slack (link in the linked issue).

* `_cached` to hint that it is not incrementally calculated

* `_bytes` to indicate the unit the size is measured in

Fixes #3748

## Problem

1. The local endpoints provision 2 ports (postgres and HTTP) which means

the migration_check endpoint has a different port than what the setup

implies

2. psycopg2-binary 2.9.3 has a deprecated poetry config and doesn't

install.

## Summary of changes

Update psycopg2-binary and update the endpoint ports in the readme

---------

Co-authored-by: Alexander Bayandin <alexander@neon.tech>

Allure does not support ansi colored logs, yet `compute_ctl` has them.

Upgrade criterion to get rid of atty dependency, disable ansi colors,

remove atty dependency and disable ansi feature of tracing-subscriber.

This is a heavy-handed approach. I am not aware of a workflow where

you'd want to connect a terminal directly to for example `compute_ctl`,

usually you find the logs in a file. If someone had been using colors,

they will now need to:

- turn the `tracing-subscriber.default-features` to `true`

- edit their wanted project to have colors

I decided to explicitly disable ansi colors in case we would have in

future a dependency accidentally enabling the feature on

`tracing-subscriber`, which would be quite surprising but not

unimaginable.

By getting rid of `atty` from dependencies we get rid of

<https://github.com/advisories/GHSA-g98v-hv3f-hcfr>.

## Problem

All tests have already been parametrised by Postgres version and build

type (to have them distinguishable in the Allure report), but despite

it, it's anyway required to have DEFAULT_PG_VERSION and BUILD_TYPE env

vars set to corresponding values, for example to

run `test_timeline_deletion_with_files_stuck_in_upload_queue[release-pg14-local_fs]`

test it's required to set `DEFAULT_PG_VERSION=14` and

`BUILD_TYPE=release`.

This PR makes the test framework pick up parameters from the test name

itself.

## Summary of changes

- Postgres version and build type related fixtures now are

function-scoped (instead of being session-scoped before)

- Deprecate `--pg-version` argument in favour of DEFAULT_PG_VERSION env

variable (it's easier to parse)

- GitHub autocomment now includes only one command with all the failed

tests + runs them in parallel

## Problem

#4528

## Summary of changes

Add a 60 seconds default timeout to the reqwest client

Add retries for up to 3 times to call into the metric consumption

endpoint

---------

Co-authored-by: Christian Schwarz <christian@neon.tech>

Prod logs have deep accidental span nesting. Introduced in #3759 and

has been untouched since, maybe no one watches proxy logs? :) I found it

by accident when looking to see if proxy logs have ansi colors with

`{neon_service="proxy"}`.

The solution is to mostly stop using `Span::enter` or `Span::entered` in

async code. Kept on `Span::entered` in cancel on shutdown related path.

## Problem

Message "set local file cache limit to..." pollutes compute logs.

## Summary of changes

Co-authored-by: Konstantin Knizhnik <knizhnik@neon.tech>

## Problem

part of https://github.com/neondatabase/neon/issues/4392, continuation

of https://github.com/neondatabase/neon/pull/4408

## Summary of changes

This PR removes all layer objects from LayerMap and moves it to the

timeline struct. In timeline struct, LayerFileManager maps a layer

descriptor to a layer object, and it is stored in the same RwLock as

LayerMap to avoid behavior difference.

Key changes:

* LayerMap is no longer generic, and only stores descriptors.

* In Timeline, we add a new struct called layer mapping.

* Currently, layer mapping is stored in the same lock with layer map.

Every time we retrieve data from the layer map, we will need to map the

descriptor to the actual object.

* Replace_historic is moved to layer mapping's replace, and the return

value behavior is different from before. I'm a little bit unsure about

this part and it would be good to have some comments on that.

* Some test cases are rewritten to adapt to the new interface, and we

can decide whether to remove it in the future because it does not make

much sense now.

* LayerDescriptor is moved to `tests` module and should only be intended

for unit testing / benchmarks.

* Because we now have a usage pattern like "take the guard of lock, then

get the reference of two fields", we want to avoid dropping the

incorrect object when we intend to unlock the lock guard. Therefore, a

new set of helper functions `drop_r/wlock` is added. This can be removed

in the future when we finish the refactor.

TODOs after this PR: fully remove RemoteLayer, and move LayerMapping to

a separate LayerCache.

all refactor PRs:

```

#4437 --- #4479 ------------ #4510 (refactor done at this point)

\-- #4455 -- #4502 --/

```

---------

Signed-off-by: Alex Chi Z <chi@neon.tech>

There is a magic number about how often we repartition and therefore

affecting how often we compact. This PR makes this number `10` a global

constant and adds docs.

---------

Signed-off-by: Alex Chi Z <chi@neon.tech>

## Problem

We want to have a number of custom extensions that will not be available

by default, as an example of such is [Postgres

Anonymizer](https://postgresql-anonymizer.readthedocs.io/en/stable/)

(please note that the extension should be added to

`shared_preload_libraries`). To distinguish them, custom extensions

should be added to a different S3 path:

```

s3://<bucket>/<release version>/<postgres_version>/<ext_name>/share/extensions/

s3://<bucket>/<release version>/<postgres_version>/<ext_name>/lib

where <ext_name> is an extension name

```
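
The layout above can be expressed as a small helper. The function name and the example argument values are hypothetical; only the path shape is taken from the description.

```rust
/// Builds the two S3 prefixes for one custom extension: the location of its
/// `share/extensions/` files and the location of its `lib` files.
pub fn custom_extension_prefixes(
    bucket: &str,
    release: &str,
    pg_version: &str,
    ext_name: &str,
) -> (String, String) {
    let base = format!("s3://{}/{}/{}/{}", bucket, release, pg_version, ext_name);
    (format!("{}/share/extensions/", base), format!("{}/lib", base))
}
```

Keeping the extension name as its own path segment is what separates custom extensions from the ones available by default.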

Resolves https://github.com/neondatabase/neon/issues/4582

## Summary of changes

- Add Postgres Anonymizer extension to Dockerfile (it's included only to

postgres-extensions target)

- Build extensions image from postgres-extensions target in a workflow

- Upload custom extensions to S3 (different directory)

When we use a local SSD for benchmarks and create the `.neon` directory before we

do `cargo neon init`, the initialization process will fail because the

directory already exists. This PR adds a flag `--force` that removes

everything inside the directory if `.neon` already exists.

---------

Signed-off-by: Alex Chi Z. <chi@neon.tech>

## Problem

See #4516

Inspecting the log, it is possible to notice that if lwlsn is set to the

beginning of the applied WAL record, then

an incorrect version of the page is loaded:

```

2023-06-27 18:36:51.930 GMT [3273945] CONTEXT: WAL redo at 0/14AF6F0 for Heap/INSERT: off 2 flags 0x01; blkref #0: rel 1663/5/1259, blk 0 FPW

2023-06-27 18:36:51.930 GMT [3273945] LOG: Do REDO block 0 of rel 1663/5/1259 fork 0 at LSN 0/**014AF6F0**..0/014AFA60

2023-06-27 18:37:02.173 GMT [3273963] LOG: Read blk 0 in rel 1663/5/1259 fork 0 (request LSN 0/**014AF6F0**): lsn=0/**0143C7F8** at character 22

2023-06-27 18:37:47.780 GMT [3273945] LOG: apply WAL record at 0/1BB8F38 xl_tot_len=188, xl_prev=0/1BB8EF8

2023-06-27 18:37:47.780 GMT [3273945] CONTEXT: WAL redo at 0/1BB8F38 for Heap/INPLACE: off 2; blkref #0: rel 1663/5/1259, blk 0

2023-06-27 18:37:47.780 GMT [3273945] LOG: Do REDO block 0 of rel 1663/5/1259 fork 0 at LSN 0/01BB8F38..0/01BB8FF8

2023-06-27 18:37:47.780 GMT [3273945] CONTEXT: WAL redo at 0/1BB8F38 for Heap/INPLACE: off 2; blkref #0: rel 1663/5/1259, blk 0

2023-06-27 18:37:47.780 GMT [3273945] PANIC: invalid lp

```

## Summary of changes

1. Use end record LSN for both cases

2. Update lwlsn for relation metadata

## Problem

We want to store Postgres Extensions in S3 (resolves

https://github.com/neondatabase/neon/issues/4493).

Proposed solution:

- Create a separate docker image (from scratch) that contains only

extensions

- Do not include extensions into compute-node (except for neon

extensions)*

- For release and main builds, extract extensions from the image

and upload them to S3 (`s3://<bucket>/<release

version>/<postgres_version>/...`)**

*) We're not doing it until the feature is fully implemented

**) This differs from the initial proposal in

https://github.com/neondatabase/neon/issues/4493 of putting extensions

straight into `s3://<bucket>/<postgres_version>/...`, because we can't

upload a directory atomically. A drawback of this is that we end up with

unnecessary copies of files ~2.1 GB per release (i.e. +2.1 GB for each

commit in main also). We don't really need to update extensions for each

release if there're no relevant changes, but this requires extra work.

## Summary of changes

- Created a separate stage in Dockerfile.compute-node

`postgres-extensions` that contains only extensions

- Added a separate step in a workflow that builds `postgres-extensions`

image (because of a bug in kaniko this step is commented out because it

takes way too long to get built)

- Extract extensions from the image and upload files to S3 for release

and main builds

- Upload extensions only for staging (for now)

## Problem

Let's keep 500 for unusual stuff that is not considered normal. This came up

during one of the discussions around the console logs now seeing these 500s.

## Summary of changes

- Return 409 Conflict instead of 500

- Remove 200 ok status because it is not used anymore

This PR concludes the "async `Layer::get_value_reconstruct_data`"

project.

The problem we're solving is that, before this patch, we'd execute

`Layer::get_value_reconstruct_data` on the tokio executor threads.

This function is IO- and/or CPU-intensive.

The IO is using VirtualFile / std::fs; hence it's blocking.

This results in unfairness towards other tokio tasks, especially under

(disk) load.

Some context can be found at

https://github.com/neondatabase/neon/issues/4154

where I suspect (but can't prove) load spikes of logical size

calculation to

cause heavy eviction skew.

Sadly we don't have tokio runtime/scheduler metrics to quantify the

unfairness.

But generally, we know blocking the executor threads on std::fs IO is

bad.

So, let's have this change and watch out for severe perf regressions in

staging & during rollout.

## Changes

* rename `Layer::get_value_reconstruct_data` to

`Layer::get_value_reconstruct_data_blocking`

* add a new blanket impl'd `Layer::get_value_reconstruct_data`

`async_trait` method that runs `get_value_reconstruct_data_blocking`

inside `spawn_blocking`.

* The `spawn_blocking` requires `'static` lifetime of the captured

variables; hence I had to change the data flow to _move_ the

`ValueReconstructState` into and back out of get_value_reconstruct_data

instead of passing a reference. It's a small struct, so I don't expect a

big performance penalty.
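
The data-flow change can be sketched with std alone. Here `std::thread::spawn` stands in for tokio's `spawn_blocking` (both require the closure to own what it captures), and `ReconstructStateSketch` plus both function names are hypothetical simplifications of the real types.

```rust
use std::thread;

/// Hypothetical, simplified stand-in for `ValueReconstructState`.
#[derive(Debug, Default, PartialEq)]
pub struct ReconstructStateSketch {
    pub records: Vec<String>,
}

/// The blocking part: takes the state by value, fills it in, and hands it back.
fn reconstruct_blocking(mut state: ReconstructStateSketch, key: u32) -> ReconstructStateSketch {
    state.records.push(format!("record for key {}", key));
    state
}

/// Runs the blocking part on another thread. The closure must own everything
/// it captures, so the state is moved in and returned, not passed as `&mut`.
pub fn reconstruct(state: ReconstructStateSketch, key: u32) -> ReconstructStateSketch {
    thread::spawn(move || reconstruct_blocking(state, key))
        .join()
        .expect("blocking worker panicked")
}
```

The caller rebinds its state to the returned value after each call, which is the price of not being able to lend a reference across the thread boundary.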

## Performance

Fundamentally, the code changes cause the following performance-relevant

changes:

* Latency & allocations: each `get_value_reconstruct_data` call now

makes a short-lived allocation because `async_trait` is just sugar for

boxed futures under the hood

* Latency: `spawn_blocking` adds some latency because it needs to move

the work to a thread pool

* using `spawn_blocking` plus the existing synchronous code inside is

probably more efficient than switching all the synchronous code

to tokio::fs because _each_ tokio::fs call does `spawn_blocking` under

the hood.

* Throughput: the `spawn_blocking` thread pool is much larger than the

async executor thread pool. Hence, as long as the disks can keep up,

which they should according to AWS specs, we will be able to deliver

higher `get_value_reconstruct_data` throughput.

* Disk IOPS utilization: we will see higher disk utilization if we get

more throughput. Not a problem because the disks in prod are currently

under-utilized, according to node_exporter metrics & the AWS specs.

* CPU utilization: at higher throughput, CPU utilization will be higher.

Slightly higher latency under regular load is acceptable given the

throughput gains and expected better fairness during disk load peaks,

such as logical size calculation peaks uncovered in #4154.

## Full Stack Of Preliminary PRs

This PR builds on top of the following preliminary PRs

1. Clean-ups

* https://github.com/neondatabase/neon/pull/4316

* https://github.com/neondatabase/neon/pull/4317

* https://github.com/neondatabase/neon/pull/4318

* https://github.com/neondatabase/neon/pull/4319

* https://github.com/neondatabase/neon/pull/4321

* Note: these were mostly to find an alternative to #4291, which I

thought we'd need in my original plan where we would need to convert

`Tenant::timelines` into an async locking primitive (#4333). In reviews,

we walked away from that, but these cleanups were still quite useful.

2. https://github.com/neondatabase/neon/pull/4364

3. https://github.com/neondatabase/neon/pull/4472

4. https://github.com/neondatabase/neon/pull/4476

5. https://github.com/neondatabase/neon/pull/4477

6. https://github.com/neondatabase/neon/pull/4485

7. https://github.com/neondatabase/neon/pull/4441

The stats for `compact_level0_phase1` that I added in #4527 show the

following breakdown (24h data from prod, only looking at compactions

with > 1 L1 produced):

* 10%ish of wall-clock time spent between the two read locks

* I learned that the `DeltaLayer::iter()` and `DeltaLayer::key_iter()`

calls actually do IO, even before we call `.next()`. I suspect that is

why they take so much time between the locks.

* 80+% of wall-clock time spent writing layer files

* Lock acquisition time is irrelevant (low double-digit microseconds at

most)

* The generation of the holes holds the read lock for a relatively long

time and it's proportional to the amount of keys / IO required to

iterate over them (max: 110ms in prod; staging (nightly benchmarks):

multiple seconds).

Find below screenshots from my ad-hoc spreadsheet + some graphs.

<img width="1182" alt="image"

src="https://github.com/neondatabase/neon/assets/956573/81398b3f-6fa1-40dd-9887-46a4715d9194">

<img width="901" alt="image"

src="https://github.com/neondatabase/neon/assets/956573/e4ac0393-f2c1-4187-a5e5-39a8b0c394c9">

<img width="210" alt="image"

src="https://github.com/neondatabase/neon/assets/956573/7977ade7-6aa5-4773-a0a2-f9729aecee0d">

## Changes In This PR

This PR makes the following changes:

* rearrange the `compact_level0_phase1` code such that we build the

`all_keys_iter` and `all_values_iter` later than before

* only grab the `Timeline::layers` lock once, and hold it until we've

computed the holes

* run compact_level0_phase1 in spawn_blocking, pre-grabbing the

`Timeline::layers` lock in the async code and passing it in as an

`OwnedRwLockReadGuard`.

* the code inside spawn_blocking drops this guard after computing the

holes

* the `OwnedRwLockReadGuard` requires the `Timeline::layers` to be

wrapped in an `Arc`. I think that's OK: the locking for the RwLock is

more heavy-weight than an additional pointer indirection.

## Alternatives Considered

The naive alternative is to throw the entire function into

`spawn_blocking`, and use `blocking_read` for `Timeline::layers` access.

What I've done in this PR is better because, with this alternative,

1. while we `blocking_read()`, we'd waste one slot in the spawn_blocking

pool

2. there's deadlock risk because the spawn_blocking pool is a finite

resource

## Metadata

Fixes https://github.com/neondatabase/neon/issues/4492

## Problem

Currently, if a user creates a role, it won't by default have any grants

applied to it. If the compute restarts, the grants get applied. This

gives a very strange UX of being able to drop roles/not have any access

to anything at first, and then once something triggers a config

application, suddenly grants are applied. This removes these grants.

This is a follow-up to

```

commit 2252c5c282

Author: Alex Chi Z <iskyzh@gmail.com>

Date: Wed Jun 14 17:12:34 2023 -0400

metrics: convert some metrics to pageserver-level (#4490)

```

The consumption metrics synthetic size worker does logical size

calculation. Logical size calculation currently does synchronous disk

IO. This blocks the MGMT_REQUEST_RUNTIME's executor threads, starving

other futures.

While there's work on the way to move the synchronous disk IO into

spawn_blocking, the quickfix here is to use the BACKGROUND_RUNTIME

instead of MGMT_REQUEST_RUNTIME.

Actually it's not just a quickfix. We simply shouldn't be blocking

MGMT_REQUEST_RUNTIME executor threads on CPU or sync disk IO.

That work isn't done yet, as many of the mgmt tasks still _do_ disk IO.

But it's not as intensive as the logical size calculations that we're

fixing here.

While we're at it, fix disk-usage-based eviction in a similar way. It

wasn't the culprit here, according to prod logs, but it can

theoretically be a little CPU-intensive.

More context, including graphs from Prod:

https://neondb.slack.com/archives/C03F5SM1N02/p1687541681336949

The docs say that `pg_uuidv7` should be added to `shared_preload_libraries`, but,

practically, it's not required.

```

postgres=# create extension pg_uuidv7;

CREATE EXTENSION

postgres=# SELECT uuid_generate_v7();

uuid_generate_v7

--------------------------------------

0188e823-3f8f-796c-a92c-833b0b2d1746

(1 row)

```

The histogram distinguishes by ok/err.

I took the liberty to create a small abstraction for such use cases.

It helps keep the label values inside `metrics.rs`, right next

to the place where the metric and its labels are declared.
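An abstraction of this kind might look roughly like the sketch below. It is not the code from this change: `OutcomeLabel`, `OutcomeHistogram`, and `demo` are hypothetical names, and a plain `HashMap` stands in for a real labeled histogram. The point is only that the label strings `"ok"` and `"err"` are defined in one place, next to the metric.

```rust
use std::collections::HashMap;

/// Hypothetical label type: the only values the `result` label may take.
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
enum OutcomeLabel {
    Ok,
    Err,
}

impl OutcomeLabel {
    /// The label value as it would appear in the exported metric.
    fn as_str(self) -> &'static str {
        match self {
            OutcomeLabel::Ok => "ok",
            OutcomeLabel::Err => "err",
        }
    }

    /// Derive the label from any `Result`, so call sites never spell out strings.
    fn of<T, E>(result: &Result<T, E>) -> Self {
        if result.is_ok() {
            OutcomeLabel::Ok
        } else {
            OutcomeLabel::Err
        }
    }
}

/// Toy stand-in for a labeled histogram: stores raw observations per label.
#[derive(Default)]
struct OutcomeHistogram {
    observations: HashMap<OutcomeLabel, Vec<f64>>,
}

impl OutcomeHistogram {
    fn observe<T, E>(&mut self, result: &Result<T, E>, seconds: f64) {
        self.observations
            .entry(OutcomeLabel::of(result))
            .or_default()
            .push(seconds);
    }

    fn count(&self, label: OutcomeLabel) -> usize {
        self.observations.get(&label).map_or(0, Vec::len)
    }
}

/// Records two successes and one failure, then returns (ok count, err count).
fn demo() -> (usize, usize) {
    let mut hist = OutcomeHistogram::default();
    let ok: Result<(), String> = Ok(());
    let err: Result<(), String> = Err("boom".to_string());
    hist.observe(&ok, 0.01);
    hist.observe(&ok, 0.02);
    hist.observe(&err, 1.5);
    (hist.count(OutcomeLabel::Ok), hist.count(OutcomeLabel::Err))
}

fn main() {
    let (ok, err) = demo();
    println!("{}={} {}={}", OutcomeLabel::Ok.as_str(), ok, OutcomeLabel::Err.as_str(), err);
}
```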

## Problem

A git tag for a release has an extra `release-` prefix (it looks like

`release-release-3439`).

## Summary of changes

- Do not add the `release-` prefix when creating the git tag

## Problem

In the test environment, vacuum duration fluctuates from ~1h to ~5h. Together

with the other two 1h benchmarks (`select-only` and `simple-update`), the job

can take up to 7h, which is longer than the 6h timeout.

## Summary of changes

- Increase timeout for pgbench-compare job to 8h

- Remove 6h timeouts from Nightly Benchmarks (6h is the default value)
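
The timeout arithmetic from the problem statement can be checked with a few lines. This is only a worked example of the numbers quoted above; the function and constant names are made up for illustration.

```rust
use std::time::Duration;

const HOUR: Duration = Duration::from_secs(60 * 60);

/// Worst-case job duration using the figures from the description:
/// vacuum up to ~5h, plus two 1h benchmarks.
fn worst_case_total() -> Duration {
    let vacuum_max = 5 * HOUR;
    let select_only = HOUR;
    let simple_update = HOUR;
    vacuum_max + select_only + simple_update
}

fn main() {
    let total = worst_case_total();
    println!("worst case: {}h", total.as_secs() / 3600);
    println!("exceeds old 6h timeout: {}", total > 6 * HOUR);
    println!("fits new 8h timeout: {}", total < 8 * HOUR);
}
```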

* `compaction_threshold` should be an integer, not a string.

* uncomment `[section]` so that if a user needs to modify the config,

they can simply uncomment the corresponding line. Otherwise it's easy

for us to forget to uncomment the `[section]` when uncommenting the

config item we want to configure.

Signed-off-by: Alex Chi <iskyzh@gmail.com>

Commit

```

commit 472cc17b7a

Author: Dmitry Rodionov <dmitry@neon.tech>

Date: Thu Jun 15 17:30:12 2023 +0300

propagate lock guard to background deletion task (#4495)

```

did a drive-by fix, but the drive-by had a typo.
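
The panic in the log that follows reports a missing `TimelineId` extractor while the span carries a field spelled `timline_id`. A plausible reading is that the check looks for an exact field name, so a misspelled field counts as absent. The sketch below illustrates that idea only; `missing_fields` is a hypothetical helper, not the actual assertion in `timeline.rs`.

```rust
/// Returns the required field names that are absent from the span's fields.
/// Matching is by exact name, so a misspelled field does not satisfy it.
fn missing_fields<'a>(span_fields: &[&str], required: &[&'a str]) -> Vec<&'a str> {
    required
        .iter()
        .copied()
        .filter(|r| !span_fields.iter().any(|f| *f == *r))
        .collect()
}

fn main() {
    // Field names as they appear in the logged span (note the typo).
    let span_fields = ["tenant_id", "timline_id"];
    let required = ["tenant_id", "timeline_id"];
    println!("missing: {:?}", missing_fields(&span_fields, &required));
}
```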

```

gc_loop{tenant_id=2e2f2bff091b258ac22a4c4dd39bd25d}:update_gc_info{timline_id=837c688fd37c903639b9aa0a6dd3f1f1}:download_remote_layer{layer=000000000000000000000000000000000000-FFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFF__00000000024DA0D1-000000000443FB51}:panic{thread=background op worker location=pageserver/src/tenant/timeline.rs:4843:25}: missing extractors: ["TimelineId"]

Stack backtrace:

0: utils::logging::tracing_panic_hook

at /libs/utils/src/logging.rs:166:21

1: <alloc::boxed::Box<F,A> as core::ops::function::Fn<Args>>::call

at /rustc/9eb3afe9ebe9c7d2b84b71002d44f4a0edac95e0/library/alloc/src/boxed.rs:2002:9

2: std::panicking::rust_panic_with_hook

at /rustc/9eb3afe9ebe9c7d2b84b71002d44f4a0edac95e0/library/std/src/panicking.rs:692:13

3: std::panicking::begin_panic_handler::{{closure}}

at /rustc/9eb3afe9ebe9c7d2b84b71002d44f4a0edac95e0/library/std/src/panicking.rs:579:13

4: std::sys_common::backtrace::__rust_end_short_backtrace

at /rustc/9eb3afe9ebe9c7d2b84b71002d44f4a0edac95e0/library/std/src/sys_common/backtrace.rs:137:18

5: rust_begin_unwind

at /rustc/9eb3afe9ebe9c7d2b84b71002d44f4a0edac95e0/library/std/src/panicking.rs:575:5

6: core::panicking::panic_fmt

at /rustc/9eb3afe9ebe9c7d2b84b71002d44f4a0edac95e0/library/core/src/panicking.rs:64:14

7: pageserver::tenant::timeline::debug_assert_current_span_has_tenant_and_timeline_id

at /pageserver/src/tenant/timeline.rs:4843:25

8: <pageserver::tenant::timeline::Timeline>::download_remote_layer::{closure#0}::{closure#0}

at /pageserver/src/tenant/timeline.rs:4368:9

9: <tracing::instrument::Instrumented<<pageserver::tenant::timeline::Timeline>::download_remote_layer::{closure#0}::{closure#0}> as core::future::future::Future>::poll

at /.cargo/registry/src/github.com-1ecc6299db9ec823/tracing-0.1.37/src/instrument.rs:272:9

10: <pageserver::tenant::timeline::Timeline>::download_remote_layer::{closure#0}

at /pageserver/src/tenant/timeline.rs:4363:5

11: <pageserver::tenant::timeline::Timeline>::get_reconstruct_data::{closure#0}

at /pageserver/src/tenant/timeline.rs:2618:69

12: <pageserver::tenant::timeline::Timeline>::get::{closure#0}

at /pageserver/src/tenant/timeline.rs:565:13

13: <pageserver::tenant::timeline::Timeline>::list_slru_segments::{closure#0}

at /pageserver/src/pgdatadir_mapping.rs:427:42

14: <pageserver::tenant::timeline::Timeline>::is_latest_commit_timestamp_ge_than::{closure#0}

at /pageserver/src/pgdatadir_mapping.rs:390:13

15: <pageserver::tenant::timeline::Timeline>::find_lsn_for_timestamp::{closure#0}

at /pageserver/src/pgdatadir_mapping.rs:338:17

16: <pageserver::tenant::timeline::Timeline>::update_gc_info::{closure#0}::{closure#0}

at /pageserver/src/tenant/timeline.rs:3967:71

17: <tracing::instrument::Instrumented<<pageserver::tenant::timeline::Timeline>::update_gc_info::{closure#0}::{closure#0}> as core::future::future::Future>::poll

at /.cargo/registry/src/github.com-1ecc6299db9ec823/tracing-0.1.37/src/instrument.rs:272:9

18: <pageserver::tenant::timeline::Timeline>::update_gc_info::{closure#0}

at /pageserver/src/tenant/timeline.rs:3948:5